Generative AI is the new hot stuff, and it's easier than you might presume. No need to spend billions on the hyperscalers' profits. For the sake of ease, as a first approach I will start here with a Linux-based setup that nevertheless runs on Windows.

This is a fundamentally good approach because it relieves you of data privacy issues and gives you much more control over your private data. It comes mostly without loss of quality, because some of the included models come from large organizations with substantial funds. And there is not even a lack of performance or service integrity:

You may integrate large language models, image generation (never write an article or give a talk on AI without kittens; the ones up there are NOT real) and even embed all of this as chatbots in your personal tools.

But to start:

Install a generally available Linux distribution. I focus here on Debian (Ubuntu should work just as well), installed in your local WSL (Windows Subsystem for Linux) environment. Most of this also applies to server installations, where I would spend some more effort to make things nice and shiny, but this is meant to be an easy-to-use, quick approach.

WSL Installation

Ensure the Windows Terminal app is installed on your Windows PC. This is not CMD!

Beyond that, installing a local LLM this way is worthwhile because it interacts easily with Windows desktop tools, which can then offer a network-independent, customized chatbot.

Open Windows Terminal with a PowerShell tab and enter:

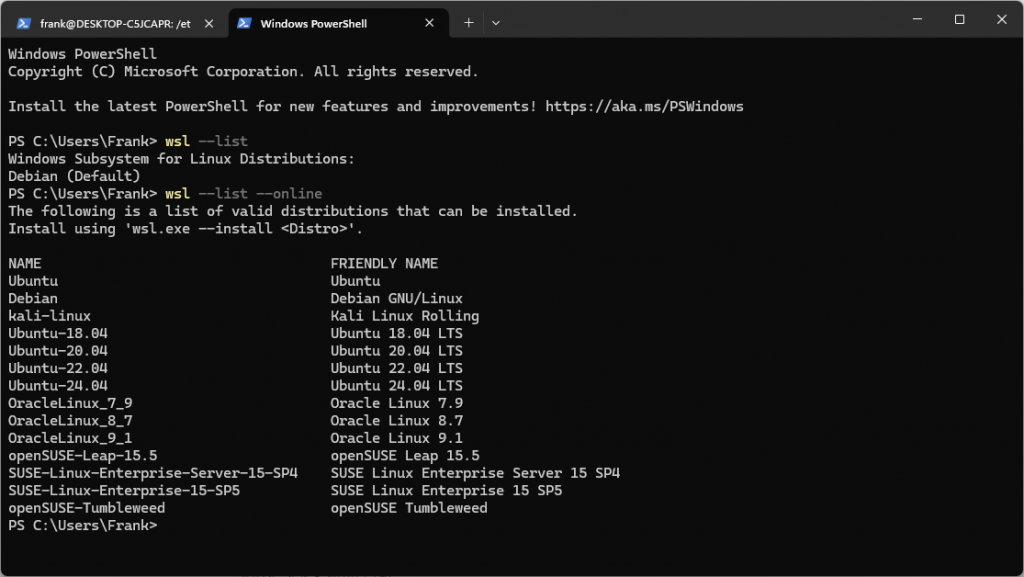

wsl --install -d Debian

Additional distributions may be available and can be installed the same way. To get an overview, run:

PS C:\Users\f.benke> wsl --list --online

I then prefer to make the application-specific distribution the default one that WSL starts.
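As a minimal sketch of that step, assuming "default" here means the default distribution and that the distribution is registered under the name Debian (check the first command's output, since the name on your machine may differ), the following could be run in the same PowerShell tab:

```powershell
# Show the installed distributions, their state and their WSL version;
# the current default is marked with an asterisk.
wsl --list --verbose

# Make Debian the distribution that a plain `wsl` call starts.
# "Debian" is an assumption; use the name shown by the list above.
wsl --set-default Debian
```

Afterwards, entering `wsl` without further arguments should open a shell in that distribution.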